Your server works… until it doesn’t: what Docker can (and can’t) fix for legacy apps

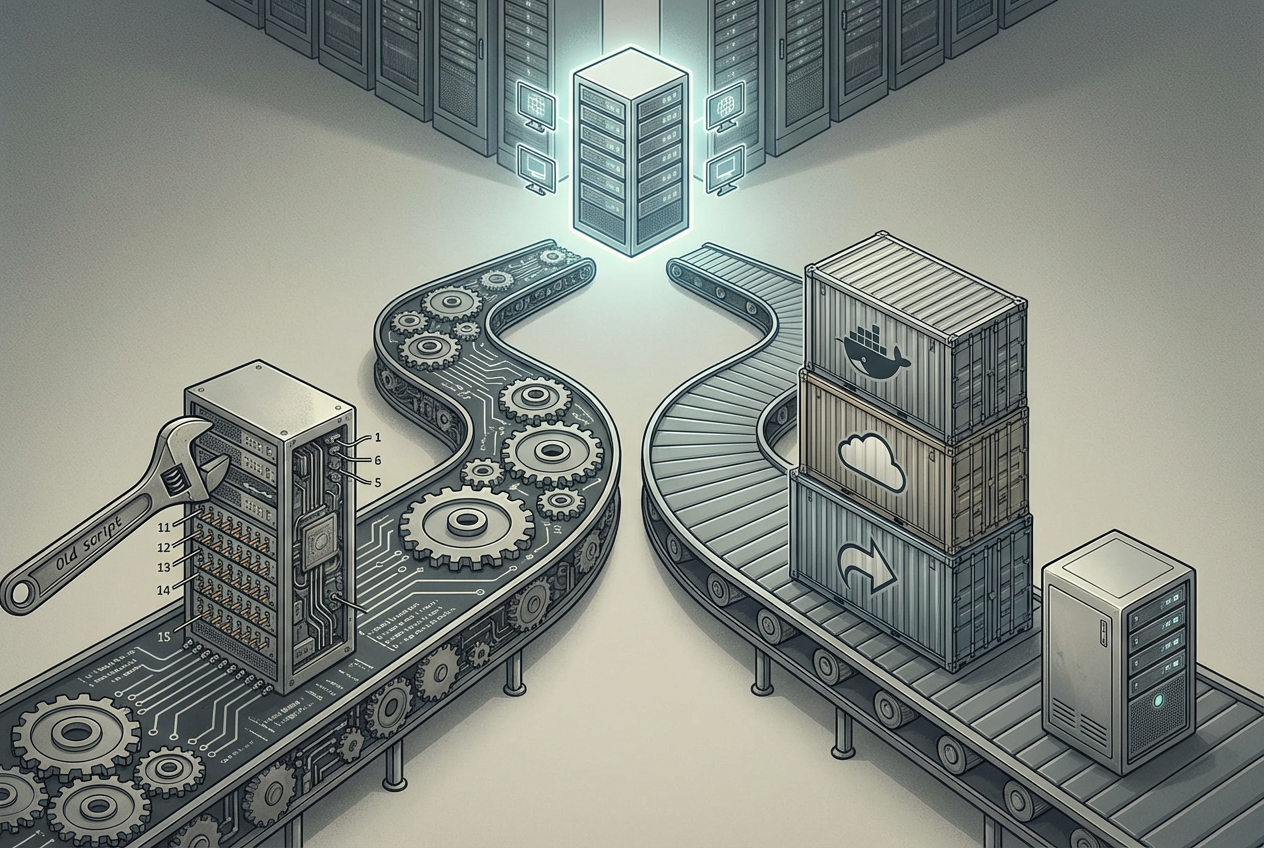

The app looks fine on the one server that’s been “the server” for years. Then you patch the OS, swap the VM, or add a new teammate, and suddenly the install notes don’t match reality. A missing package, an older runtime, a custom config file in /etc—any of it can turn a routine deploy into a late-night rollback.

Docker can help by freezing the runtime and system packages into an image that runs the same way on every host, and that rebuilds predictably once you pin what goes into it. It won’t fix hidden state on the host, messy data handling, or an app that only works because someone once ran a manual command.

And it adds its own friction: volumes, ports, networking, and secrets have to be handled on purpose. Before you reach for containers, it’s worth asking what you’re really trying to make repeatable.

Do you actually need a container, or just a better rebuild script?

Most teams reach for Docker right after a “works on that box” scare, but the first repeatability win is often simpler: can you rebuild the server (or a fresh VM) from scratch with one command and get the app serving traffic? If you can’t, Docker will mostly repackage the same guesswork—just inside an image.

Try writing a rebuild script or Makefile that installs OS packages, the runtime, and any build steps, then runs a smoke test. If that gets you to a clean, repeatable setup, you may not need a container yet. The trade-off is you’re still tied to a specific OS family and repo state: an unpinned “apt install” pulls whatever version the repo serves that day, so two rebuilds a month apart can quietly differ.
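A rebuild script along those lines might start like the sketch below. It assumes a Debian/Ubuntu host; the `make build` target, the `yourapp` service name, and the health URL are placeholders for illustration, not details of any particular app.

```shell
#!/bin/sh
# rebuild.sh: sketch of a one-command rebuild for a Debian/Ubuntu host.
# The build target, service name, and health URL are placeholders.

# Install OS packages; pass name=version to pin (apt accepts that form).
install_packages() {
    apt-get update &&
    apt-get install -y --no-install-recommends "$@"
}

# Poll a URL until it answers HTTP 200, or give up after N attempts.
smoke_test() {
    url=$1
    attempts=${2:-10}
    code=""
    i=1
    while [ "$i" -le "$attempts" ]; do
        # curl prints 000 when it cannot connect at all
        code=$(curl -s -o /dev/null -w '%{http_code}' "$url" || true)
        if [ "$code" = "200" ]; then
            echo "smoke test passed: $url"
            return 0
        fi
        i=$((i + 1))
        sleep "${SMOKE_DELAY:-2}"
    done
    echo "smoke test failed: $url (last status: ${code:-none})" >&2
    return 1
}

# The whole rebuild, top to bottom. Stops at the first failing step.
rebuild() {
    install_packages "$@" &&
    make build &&                                  # hypothetical build target
    systemctl restart "${SERVICE:-yourapp}" &&     # hypothetical service name
    smoke_test "${HEALTH_URL:-http://localhost:8080/}"
}
```

Ending the script with `rebuild "$@"` and calling it with a list of `name=version` packages gives you the one command. If it passes on a fresh VM twice in a row, you have your repeatability baseline.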

If the script keeps growing “special cases” (this Ubuntu minor version, that PHP repo, that one service config), a container starts paying off by letting you pin and ship the whole runtime. To do that well, you need to surface the mystery dependencies first.

Inventory the “mystery dependencies” before you write a Dockerfile

Those “mystery dependencies” usually show up the moment you try to rebuild on a clean machine: the app starts, then dies because a binary isn’t on PATH, a shared library is missing, or a config file on the server was doing more work than you realized. If you skip this step and jump to a Dockerfile, you’ll just play whack-a-mole in docker build logs.

Start by listing what the app actually needs at runtime, not what you remember installing. On the server, capture OS packages, language/runtime versions, and any services it talks to locally (cron, systemd units, Redis, ImageMagick, wkhtmltopdf, LDAP libs). Then inventory file-level dependencies: configs in /etc, custom CA certs, fonts, and any “one-off” scripts called by cron or deploy hooks.
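One way to capture that inventory is to snapshot the old server and a fresh machine, then diff the two. The sketch below assumes a Debian/Ubuntu host with systemd; the function names and output file names are made up for this example.

```shell
# Snapshot installed packages, enabled services, and the current user's crontab.
snapshot_host() {
    out=$1
    mkdir -p "$out"
    dpkg-query -W -f='${Package}=${Version}\n' | sort > "$out/packages.txt"
    systemctl list-unit-files --type=service --state=enabled --no-legend > "$out/services.txt"
    crontab -l > "$out/crontab.txt" 2>/dev/null || true
}

# List the shared libraries a binary links against (pass the path to the binary).
shared_libs() {
    ldd "$1" | awk '{print $1}' | sort -u
}

# Print package names present in the old snapshot but absent from the fresh one.
missing_packages() {
    old_names=$(mktemp)
    new_names=$(mktemp)
    cut -d= -f1 "$1" | sort -u > "$old_names"
    cut -d= -f1 "$2" | sort -u > "$new_names"
    comm -23 "$old_names" "$new_names"
    rm -f "$old_names" "$new_names"
}
```

Run `snapshot_host` on both machines, copy the two `packages.txt` files side by side, and `missing_packages old/packages.txt fresh/packages.txt` gives you a candidate list of what the app may be leaning on. It will include noise, so treat it as a list to review, not a list to install.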

The friction: you’ll find things you can’t safely bake into an image (like host cert stores or environment-specific config). Write them down anyway, because they become explicit mounts, env vars, or secrets later—before you decide what to copy into your first Dockerfile.

Your first Dockerfile: copy less, pin more, and accept an imperfect first build

That list of “things you can’t safely bake in” is exactly what keeps a first Dockerfile from turning into a trash bin. Start by containerizing only what should be the same everywhere: the base OS, the runtime, and the system packages your app needs to boot.

Copy less than you think. Copy the app code and the files required to run it, but don’t copy logs, uploads, tmp, or local config overrides. If your repo includes a .env or config/*.local, leave it out on purpose so you’re forced to pass config in at runtime. Use a .dockerignore like you’d use .gitignore: keep the build context small so rebuilds stay fast and predictable.
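A starter .dockerignore for that "copy less" rule could be written like this. The directory names are guesses at a typical legacy layout, so adjust them to what your repo actually contains.

```shell
# Write a starter .dockerignore next to the Dockerfile.
# Directory names are assumptions about the repo layout, not fixed conventions.
cat > .dockerignore <<'EOF'
.git
.env
.env.*
config/*.local
logs/
uploads/
tmp/
EOF
```

Excluding `.env` and `config/*.local` here means a `COPY . .` in the Dockerfile cannot pick them up by accident, which is the point: config has to arrive at runtime.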

Pin more than you think, too. Choose a specific base image tag (not latest), commit your language lockfiles (composer.lock, Gemfile.lock, package-lock.json) and install from them, and pin OS package versions where you can. The trade-off is you’ll fight older repos and broken mirrors sooner, but you’ll stop “silent upgrades” from being your deploy strategy. Aim for “builds and starts,” then tighten from there.
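The "not latest" rule is easy to let slip, so it can help to check it mechanically. The function below is a rough sketch of such a check (the name `check_pinned_base` is invented here): it flags any FROM line whose image has no tag or uses latest, and skips digests, `scratch`, and references to earlier build stages. It does not handle ARG-substituted image names.

```shell
# Fail if any FROM line in a Dockerfile uses an untagged or :latest base image.
check_pinned_base() {
    awk '
        toupper($1) == "FROM" {
            i = 2
            while ($i ~ /^--/) i++                 # skip flags such as --platform=...
            ref = $i
            if (toupper($(i + 1)) == "AS") stages[$(i + 2)] = 1
            if (ref == "scratch" || (ref in stages) || ref ~ /@sha256:/) next
            n = split(ref, parts, "/")
            last = parts[n]                        # ignore any registry host:port prefix
            if (last !~ /:/ || last ~ /:latest$/) {
                printf "unpinned base image: %s\n", ref
                bad = 1
            }
        }
        END { exit bad }
    ' "$1"
}
```

Calling `check_pinned_base Dockerfile` in CI before `docker build` turns the rule into a failing step. Keep in mind that a tag can be re-pushed by its publisher, so a digest is the stronger pin if you need one.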

Running locally without faking production: ports, env vars, and one command that always works

Once the image “builds and starts,” the next failure is usually local run friction: the container boots, but nothing answers on localhost, or it answers with the wrong config. That’s almost always ports and environment. Map the app’s listening port to a predictable host port (for example, -p 8080:80), and treat every setting you used to tweak in /etc as an explicit -e (or an --env-file) so a new machine gets the same behavior.

Keep the local goal small: “serves a page and passes a smoke test,” not “identical to production.” The trade-off is you’ll miss host-level behavior (systemd units, cron timing, local mail, odd DNS), so write down what’s out-of-scope and decide if it should become another container or an external dependency.

Make one command the default and keep it boring: docker run --rm -p 8080:80 --env-file .env.local yourapp:dev. Once that works, you’re ready for the real question: where config, secrets, and data should live.
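If you want that command to fail with a useful message instead of a Docker error, a thin wrapper is enough. This is a sketch: the variable names are arbitrary, and the defaults simply mirror the command above.

```shell
#!/bin/sh
# run-local.sh: the one boring command, with its inputs checked first.
IMAGE="${IMAGE:-yourapp:dev}"
HOST_PORT="${HOST_PORT:-8080}"
ENV_FILE="${ENV_FILE:-.env.local}"

run_local() {
    if [ ! -f "$ENV_FILE" ]; then
        echo "missing $ENV_FILE: create it before running" >&2
        return 1
    fi
    # Container port 80 matches the example above; change it if your app listens elsewhere.
    docker run --rm -p "$HOST_PORT:80" --env-file "$ENV_FILE" "$IMAGE"
}
```

With `run_local` called at the end of the script, a new teammate runs `./run-local.sh` and either gets the app on localhost:8080 or a one-line explanation of what is missing.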

Where your data and secrets live is the whole game

That “one command” falls apart fast if you also try to cram your database files, uploaded assets, and API keys into the image. It will run once, then you’ll rebuild and wonder why user uploads vanished, or you’ll ship an image and realize it contains production secrets. The rule that keeps you out of trouble is simple: images are for code and runtime; data and secrets must survive rebuilds and be swappable per environment.

Put state on purpose. For local dev, mount a named volume for the database and a bind mount (or volume) for uploads, so a rebuild doesn’t wipe them. In a VM-style deploy, map those volumes to specific host paths you back up. The trade-off is permissions and paths: the container user may not be able to write to the mounted directory until you fix ownership, and “it works on my laptop” can turn into “permission denied” on the server.
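In command form, putting uploads outside the image and checking the permissions problem up front might look like this. The `/app/uploads` path and the `yourapp_uploads` volume name are assumptions about your app, and the check assumes the image ships a `sh` binary.

```shell
# A volume name, or an absolute host path (which makes it a bind mount).
UPLOADS_MOUNT="${UPLOADS_MOUNT:-yourapp_uploads}"

# Run the app with uploads stored outside the image, so rebuilds leave them alone.
run_with_uploads() {
    docker run --rm -p 8080:80 \
        -v "$UPLOADS_MOUNT:/app/uploads" \
        --env-file .env.local \
        yourapp:dev
}

# Ask the container itself whether its default user can write to the mount.
# Catches "permission denied" before a user upload does.
check_uploads_writable() {
    docker run --rm --entrypoint sh \
        -v "$UPLOADS_MOUNT:/app/uploads" \
        yourapp:dev \
        -c 'touch /app/uploads/.write-test && rm /app/uploads/.write-test'
}
```

If `check_uploads_writable` fails on the server but passes on your laptop, compare the owner of the host directory with the user the container runs as; `docker run --rm --entrypoint id yourapp:dev` should print the latter if the image includes `id`.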

Handle secrets the same way: pass env vars at runtime, use an env file that never hits git, and mount certs/keys as read-only files when needed. Once you do that, you’re ready to deploy something staging-like—and hit the point where Docker starts adding moving parts.

Shipping it: a staging-like deploy and the moment Docker starts adding complexity

That “staging-like” deploy usually starts as a copy of your local run command, but on a real VM: build the image in CI (or on a build box), push it to a registry, then pull and run the exact tag on the server. Keep it boring: one container for the web app, and point it at an external database (or a separate DB container with a named volume) so a redeploy doesn’t touch data. Add a smoke test endpoint and make your deploy script fail fast if it doesn’t return a 200.

The first real friction shows up around networking and restarts. On a single host you’ll want a reverse proxy (nginx/Caddy/Traefik) to own ports 80/443, do TLS, and route to the container. You’ll also want a restart policy, a log destination, and a clear place for env vars so “rebooted the VM” doesn’t become “why is the app down.”
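A run command that covers those three items could look like the sketch below. The container name, env file path, and log limits are illustrative values, and the restart policy only helps if the Docker daemon itself starts on boot.

```shell
APP_NAME="${APP_NAME:-yourapp}"
ENV_FILE="${ENV_FILE:-/etc/yourapp/app.env}"   # hypothetical location for server-side env vars

# Start the web container so it comes back after a reboot and its logs stay bounded.
start_app() {
    image=$1    # an exact tag, such as the one CI pushed to your registry
    docker run -d --name "$APP_NAME" \
        --restart unless-stopped \
        --log-driver json-file --log-opt max-size=10m --log-opt max-file=5 \
        -p 127.0.0.1:8080:80 \
        --env-file "$ENV_FILE" \
        "$image"
}
```

Publishing on 127.0.0.1 keeps the container off the public interface, so the reverse proxy on the same host is the only way in.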

This is the moment Docker stops being “a package” and becomes “a runtime you operate.” If the deploy now needs docker run flags nobody remembers, you’re ready for a compose file and a minimal, repeatable baseline.

A safer baseline you can build on (without pretending you’ve modernized)

A minimal, repeatable baseline starts when your deploy stops depending on remembered docker run flags. Put them in a Docker Compose file: the exact image tag, the ports, the env file, the read-only mounts for certs, and the named volumes for anything that must survive rebuilds. Commit the compose file, not the secrets. Add one small script that pulls the tag, runs the smoke test, then swaps the container.
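That small script might be sketched as follows. It assumes the compose file's image line ends in `:${APP_TAG}` so the tag can be passed in from the environment; the registry name, ports, env file, and the `deployed-tags.log` file are all placeholders.

```shell
#!/bin/sh
# deploy.sh: pull an exact tag, smoke-test it on a spare port, then swap.
IMAGE="${IMAGE:-registry.example.com/yourapp}"   # placeholder registry/image
ENV_FILE="${ENV_FILE:-.env.staging}"
CANDIDATE="yourapp-candidate"
CANDIDATE_PORT=8081

# Succeed only if the URL answers HTTP 200 within 10 tries.
wait_for_200() {
    tries=0
    while [ "$tries" -lt 10 ]; do
        code=$(curl -s -o /dev/null -w '%{http_code}' "$1" || true)
        [ "$code" = "200" ] && return 0
        tries=$((tries + 1))
        sleep "${SMOKE_DELAY:-2}"
    done
    return 1
}

deploy() {
    tag=$1
    docker pull "$IMAGE:$tag" || return 1
    docker rm -f "$CANDIDATE" >/dev/null 2>&1    # clear any leftover candidate
    docker run -d --name "$CANDIDATE" -p "127.0.0.1:$CANDIDATE_PORT:80" \
        --env-file "$ENV_FILE" "$IMAGE:$tag" >/dev/null || return 1
    if ! wait_for_200 "http://127.0.0.1:$CANDIDATE_PORT/"; then
        docker logs --tail 50 "$CANDIDATE" >&2
        docker rm -f "$CANDIDATE" >/dev/null
        echo "deploy aborted: $IMAGE:$tag failed the smoke test" >&2
        return 1
    fi
    docker rm -f "$CANDIDATE" >/dev/null
    # Swap: compose recreates the container because the image tag changed.
    APP_TAG=$tag docker compose up -d || return 1
    echo "$tag" >> deployed-tags.log   # last line is the live tag, the one before is the rollback target
}
```

The candidate container reads the same env file as the live one, so it talks to the same database; keep the smoke test read-only. Rolling back is the same script with the previous tag from `deployed-tags.log`.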

This won’t make the app “cloud native.” It makes failure modes visible. The trade-off is you now own two layers: the app and the container runtime. Plan for the boring work—log rotation, disk space, volume backups, and a rollback to the previous image tag—then you can change the server without gambling.